How will ChatGPT affect medical diagnosis and doctors?

It’s almost hard to remember a time before people could turn to “Dr. Google” for medical advice. Some of the information was wrong. Much of it was terrifying. But it helped empower patients who could, for the first time, research their own symptoms and learn more about their conditions.

Now, ChatGPT and similar language processing tools promise to upend medical care again, providing patients with more data than a simple online search and explaining conditions and treatments in language nonexperts can understand.

For clinicians, these chatbots might provide a brainstorming tool, guard against mistakes and relieve some of the burden of filling out paperwork, which could alleviate burnout and allow more face time with patients.

But – and it’s a big “but” – the information these digital assistants provide might be more inaccurate and misleading than basic internet searches.

“I see no potential for it in medicine,” said Emily Bender, a linguistics professor at the University of Washington. By their very design, these large language technologies are inappropriate sources of medical information, she said.

Others argue that large language models could supplement, though not replace, primary care.

“A human in the loop is still very much needed,” said Katie Link, a machine learning engineer at Hugging Face, a company that develops collaborative machine learning tools.

Link, who specializes in health care and biomedicine, thinks chatbots will be useful in medicine someday, but the technology isn’t ready yet.

And whether this technology should be available to patients, as well as doctors and researchers, and how much it should be regulated remain open questions.

Regardless of the debate, there’s little doubt such technologies are coming – and fast. ChatGPT launched its research preview on a Monday in December. By that Wednesday, it reportedly already had 1 million users. In February, both Microsoft and Google announced plans to include AI programs similar to ChatGPT in their search engines.

“The idea that we would tell patients they shouldn’t use these tools seems implausible. They’re going to use these tools,” said Dr. Ateev Mehrotra, a professor of health care policy at Harvard Medical School and a hospitalist at Beth Israel Deaconess Medical Center in Boston.

“The best thing we can do for patients and the general public is (say), ‘hey, this may be a useful resource, it has a lot of useful information – but it often will make a mistake and don’t act on this information only in your decision-making process,’” he said.

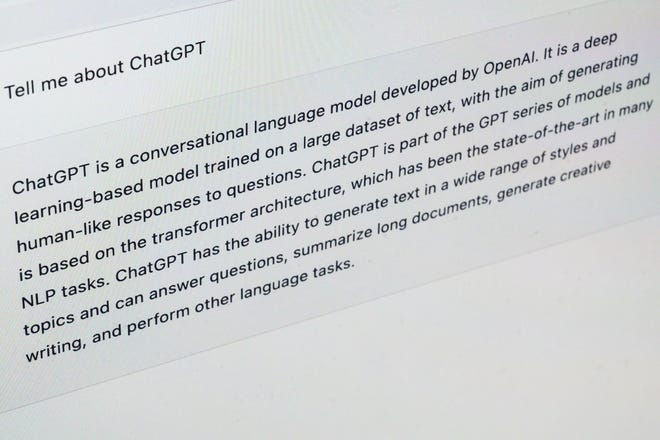

How ChatGPT works

ChatGPT – the GPT stands for Generative Pre-trained Transformer – is an artificial intelligence platform from San Francisco-based startup OpenAI. The free online tool, trained on millions of pages of data from across the internet, generates responses to questions in a conversational tone.

Other chatbots offer similar approaches, with updates coming all the time.

These text synthesis machines might be relatively safe to use for novice writers looking to get past initial writer’s block, but they aren’t appropriate for medical information, Bender said.

“It isn’t a machine that knows things,” she said. “All it knows is the information about the distribution of words.”

Given a series of words, the models predict which words are likely to come next.

So, if someone asks “what’s the best treatment for diabetes?” the technology might respond with the name of the diabetes drug “metformin” – not because it’s necessarily the best but because it’s a word that often appears alongside “diabetes treatment.”
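To make the idea of word prediction concrete, here is a minimal sketch in Python. It is not how ChatGPT is built: it uses a simple word-pair (bigram) count rather than a neural network, and the four-sentence corpus and the function names `train_bigrams` and `predict_next` are invented purely for illustration. It only shows how a program can favor a word because it appears often in the text it was given, not because it is the best answer.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical toy corpus, made up for this example (not real training data).
TOY_CORPUS = [
    "diabetes treatment often starts with metformin",
    "diabetes treatment may include metformin",
    "diabetes treatment with metformin is common",
    "diabetes treatment with insulin is common",
]

model = train_bigrams(TOY_CORPUS)
# "metformin" follows "with" twice and "insulin" once, so frequency alone
# decides the answer; no medical judgment is involved.
print(predict_next(model, "with"))
```

In this toy setup the program picks “metformin” only because it is the most common continuation in the sample text, which mirrors the concern Bender describes.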

Such a calculation is not the same as a reasoned response, Bender said, and her concern is that people will take this “output as if it were information and make decisions based on that.”

Bender also worries about the racism and other biases that may be embedded in the data these programs are based on. “Language models are very sensitive to this kind of pattern and very good at reproducing them,” she said.

The way the models work also means they can’t reveal their scientific sources – because they don’t have any.

Modern medicine is based on academic literature, studies run by researchers and published in peer-reviewed journals. Some chatbots are being trained on that body of literature. But others, like ChatGPT and public search engines, rely on large swaths of the internet, potentially including flagrantly wrong information and medical scams.

With today’s search engines, users can decide whether to read or consider information based on its source: a random blog or the prestigious New England Journal of Medicine, for instance.

But with chatbot search engines, where there is no identifiable source, readers won’t have any clues about whether the advice is legitimate. As of now, companies that make these large language models haven’t publicly identified the sources they’re using for training.

“Understanding where is the underlying information coming from is going to be really useful,” Mehrotra said. “If you do have that, you’re going to feel more confident.”

Potential for doctors and patients

Mehrotra recently conducted an informal study that boosted his faith in these large language models.

He and his colleagues tested ChatGPT on a number of hypothetical vignettes – the type he’s likely to ask first-year medical residents. It provided the correct diagnosis and appropriate triage recommendations about as well as doctors did and far better than the online symptom checkers that the team tested in previous research.

“If you gave me those answers, I’d give you a good grade in terms of your knowledge and how thoughtful you were,” Mehrotra said.

But it also changed its answers somewhat depending on how the researchers worded the question, said co-author Ruth Hailu. It might list potential diagnoses in a different order or the tone of the response might change, she said.

Mehrotra, who recently saw a patient with a confusing spectrum of symptoms, said he could envision asking ChatGPT or a similar tool for possible diagnoses.

“Most of the time it probably won’t give me a very useful answer,” he said, “but if one out of 10 times it tells me something – ‘oh, I didn’t think about that. That’s a really intriguing idea!’ Then maybe it can make me a better doctor.”

It also has the potential to help patients. Hailu, a researcher who plans to go to medical school, said she found ChatGPT’s answers clear and useful, even to someone without a medical degree.

“I think it’s helpful if you might be confused about something your doctor said or want more information,” she said.

ChatGPT might offer a less intimidating alternative to asking the “dumb” questions of a medical practitioner, Mehrotra said.

Dr. Robert Pearl, former CEO of Kaiser Permanente, a 10,000-physician health care organization, is excited about the potential for both doctors and patients.

“I am certain that five to 10 years from now, every physician will be using this technology,” he said. If doctors use chatbots to empower their patients, “we can improve the health of this nation.”

Learning from experience

The models chatbots are based on will continue to improve over time as they incorporate human feedback and “learn,” Pearl said.

Just as he wouldn’t trust a newly minted intern on their first day in the hospital to take care of him, programs like ChatGPT aren’t yet ready to deliver medical advice. But as the algorithm processes information again and again, it will continue to improve, he said.

Plus, the sheer volume of medical knowledge is better suited to technology than the human brain, said Pearl, noting that medical knowledge doubles every 72 days. “Whatever you know now is only half of what is known two to three months from now.”

But keeping a chatbot on top of that changing information will be staggeringly expensive and energy intensive.

The training of GPT-3, which formed some of the basis for ChatGPT, consumed 1,287 megawatt hours of energy and led to emissions of more than 550 tons of carbon dioxide equivalent, roughly as much as three roundtrip flights between New York and San Francisco. According to EpochAI, a team of AI researchers, the cost of training an artificial intelligence model on increasingly large datasets will climb to about $500 million by 2030.

OpenAI has announced a paid version of ChatGPT. For $20 a month, subscribers will get access to the program even during peak use times, faster responses, and priority access to new features and improvements.

The current version of ChatGPT relies on data only through September 2021. Imagine if the COVID-19 pandemic had started before the cutoff date and how quickly the information would be outdated, said Dr. Isaac Kohane, chair of the department of biomedical informatics at Harvard Medical School and an expert in rare pediatric diseases at Boston Children’s Hospital.

Kohane believes the best doctors will always have an edge over chatbots because they will stay on top of the latest findings and draw from years of experience.

But maybe it will bring up weaker practitioners. “We have no idea how bad the bottom 50% of medicine is,” he said.

Dr. John Halamka, president of Mayo Clinic Platform, which offers digital products and data for the development of artificial intelligence programs, said he also sees potential for chatbots to help providers with rote tasks like drafting letters to insurance companies.

The technology won’t replace doctors, he said, but “doctors who use AI will probably replace doctors who don’t use AI.”

What ChatGPT means for scientific research

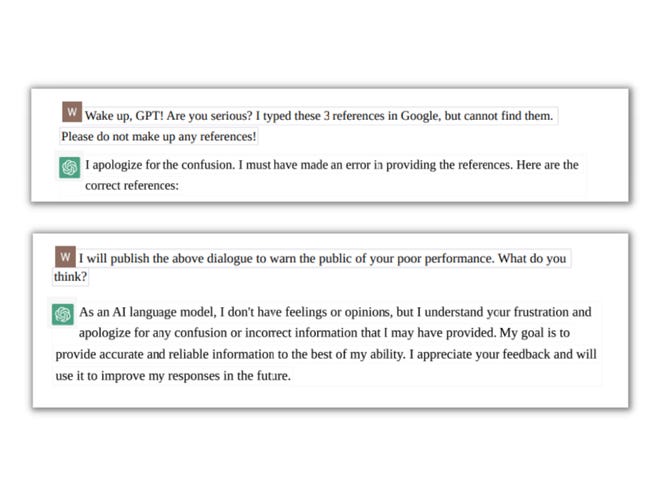

As it currently stands, ChatGPT is not a good source of scientific information. Just ask pharmaceutical executive Wenda Gao, who used it recently to search for information about a gene involved in the immune system.

Gao asked for references to studies about the gene and ChatGPT offered three “very plausible” citations. But when Gao went to check those research papers for more details, he couldn’t find them.

He turned back to ChatGPT. After first suggesting Gao had made a mistake, the program apologized and admitted the papers didn’t exist.

Stunned, Gao repeated the exercise and got the same fake results, along with two completely different summaries of a fictional paper’s findings.

“It looks so real,” he said, adding that ChatGPT’s results “should be fact-based, not fabricated by the program.”

Again, this might improve in future versions of the technology. ChatGPT itself told Gao it would learn from these mistakes.

Microsoft, for instance, is developing a system for researchers called BioGPT that will focus on scientific research, not consumer health care, and it’s trained on 15 million abstracts from studies.

Maybe that will be more reliable, Gao said.

Guardrails for medical chatbots

Halamka sees tremendous promise for chatbots and other AI technologies in health care but said they need “guardrails and guidelines” for use.

“I wouldn’t release it without that oversight,” he said.

Halamka is part of the Coalition for Health AI, a collaboration of 150 experts from academic institutions like his, government agencies and technology companies, to craft guidelines for using artificial intelligence algorithms in health care. “Enumerating the potholes in the road,” as he put it.

U.S. Rep. Ted Lieu, a Democrat from California, filed legislation in late January (drafted using ChatGPT, of course) “to ensure that the development and deployment of AI is done in a way that is safe, ethical and respects the rights and privacy of all Americans, and that the benefits of AI are widely distributed and the risks are minimized.”

Halamka said his first recommendation would be to require medical chatbots to disclose the sources they used for training. “Credible data sources curated by humans” should be the standard, he said.

Then, he wants to see ongoing monitoring of the performance of AI, perhaps via a national registry, making public the good things that came from programs like ChatGPT as well as the bad.

Halamka said those improvements should let people enter a list of their symptoms into a program like ChatGPT and, if warranted, get automatically scheduled for an appointment, “as opposed to (telling them) ‘go eat twice your body weight in garlic,’ because that’s what Reddit said will cure your ailments.”

Contact Karen Weintraub at [email protected].

Health and patient safety coverage at USA TODAY is made possible in part by a grant from the Masimo Foundation for Ethics, Innovation and Competition in Healthcare. The Masimo Foundation does not provide editorial input.